The Facebook Outage of 2021

In an age when social media is everywhere, many people take for granted that it will always be there for us. Families and friends communicate with each other over these platforms, and many businesses rely on them to engage with their client base. Some businesses rely on a single social media platform to run their entire business.

That is why, when Facebook went dark on Monday, October 4, 2021, the world was shaken. Facebook, an industry leader, captures over 71% of the global social media marketplace according to Statista. The outage, which took down Facebook, Messenger, Instagram, WhatsApp, and Oculus, impacted millions of people and seriously hurt many small businesses. It also had a cascading effect, preventing access to other applications that rely on Facebook for authentication.

Just to illustrate how severely some small businesses were impacted during the outage, NYTimes published a statement from a small business owner: “With Facebook being down we’re losing thousands in sales,” said Mark Donnelly, a start-up founder in Ireland who runs HUH Clothing, a fashion brand focused on mental health that uses Facebook and Instagram to reach customers. “It may not sound like a lot to others but missing out on four or five hours of sales could be the difference between paying the electricity bill or rent for the month.”

An outage of this magnitude brings up many questions.

I reached out to a panel of industry leaders for their perspective and insight into what happened, and how we can learn from this incident to better prepare ourselves and prevent such a catastrophe from happening to us.

The panel consisted of the following leaders:

· Paul Barrett, Chief Technology Officer, Enterprise at NETSCOUT

· Tyler Cohen Wood — Founder, CEO MyConnectedHealth, Top 50 Global Women in Cybersecurity, and Former US Defense Intelligence Agency Cyber Deputy Division Chief.

· Scott Schober — President/CEO, Berkeley Varitronics Systems, Inc.

· Mirko Ross — CEO asvin.io.

· Chuck Brooks — President Brooks Consulting International, Adjunct Faculty in the Graduate Cyber Risk Program at Georgetown University.

· Bob Carver — Analyst in Cybersecurity, Technology, IoT, Risk Management, and Future of Work

Avrohom: Tyler, we know that Facebook went down. What exactly happened? What are the details?

Tyler: When I first heard someone say that Facebook was down, I initially thought it was a cyberattack. But the more I looked into the widespread nature of the outage, the less likely a breach seemed and the more probable it became that the outage was due to a configuration error. It was too widespread to be an external hack, with both internal and external Facebook, WhatsApp, and Instagram systems affected.

Avrohom: That’s a great explanation. Mirko, since your specialty is remote updates of IoT devices, why couldn’t this configuration just be corrected remotely? Why did it take so long to get services back up and running?

Mirko: Usually you go for a remote reconfiguration and restart of the routers. However, in this case, the servers couldn’t be reached remotely because the network was disabled. So the engineers had to physically travel to a data center to reconfigure and restart the routers. That is what took so long. After that, the DNS records needed to sync again on a broader level, which is also quite time-consuming.

Avrohom: Bob, as an expert in Cybersecurity and Risk Management, what is your take on what happened?

Bob: When I heard about the initial Facebook outage, my first suspicion was a DNS issue of some sort. I attempted to resolve facebook.com at the command line and, sure enough, DNS was unable to resolve it. Later I heard there was a BGP routing issue that was tied to DNS. The response from Facebook was that it was a configuration error. That can happen if one is not careful on several fronts. However, there was later a rumor that this may have been the result of some type of cyberattack. That is also a possibility. Native BGP is not the most secure protocol. Facebook states that it was a configuration error; the question, however, is whether there was some type of malicious intent behind those configuration issues.

Facebook had another 2-hour outage a few days ago. Again, Facebook gave a configuration error as the cause. Was there malicious intent involved?

Facebook needs to review how it can improve its processes and security to avoid issues like this in the future, possibly adding an additional route to fail over to if this were to happen again.
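Bob's command-line test is easy to reproduce. The following is a minimal Python sketch of that kind of check, not a tool anyone on the panel described: the function names `can_resolve` and `resolution_report` are hypothetical, and the `resolver` parameter exists only so the check can be exercised without a live network.

```python
import socket


def can_resolve(hostname, resolver=socket.getaddrinfo):
    """Return True if the hostname resolves to at least one address."""
    try:
        # getaddrinfo raises socket.gaierror when the name cannot be resolved.
        return len(resolver(hostname, None)) > 0
    except socket.gaierror:
        return False


def resolution_report(hostnames, resolver=socket.getaddrinfo):
    """Map each hostname to True (resolves) or False (does not resolve)."""
    return {name: can_resolve(name, resolver) for name in hostnames}
```

Calling `resolution_report(["facebook.com", "instagram.com", "whatsapp.com"])` during an incident would show at a glance whether name resolution is failing for all of the related services at once, which points to a shared cause rather than a problem with one application.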

Avrohom: You bring up a lot of great points, Bob. We had a massive outage, followed by another just a few days ago. There was a configuration issue that may have been human error or perhaps something more malicious. Paul, it sounds like the real problem was a lack of visibility across the network. What’s your take on that?

Paul: That’s exactly it. The real challenge was that there was a lack of visibility across the network. At NETSCOUT we measure networks by their level of observability.

Observability is a property that enables visibility. Observability represents the degree to which a system’s internal state can be observed and understood, either by design or via instrumentation and monitoring. When I think about outages in general, the level of observability is one of the most important considerations.

In isolation, the Facebook outage had some very specific causes. But from a more general perspective, it provides a startling example of how relatively minor errors can have far-reaching consequences. It also reinforces the enormous importance of maintaining a high level of observability in automated systems. Such observability, coupled with continuous monitoring, allows organizations to catch errors early, whether they arise from the system’s programming or exist in the fabric of the system itself.
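As a rough illustration of what continuous monitoring means in practice, here is a minimal Python sketch that tracks the most recent health-probe results and raises a flag when too many of them fail. It is only an assumption-laden example: the class name `ProbeMonitor`, the window size, and the threshold are all hypothetical, and it uses simple active probing rather than anything NETSCOUT describes.

```python
from collections import deque


class ProbeMonitor:
    """Track recent probe results and flag when too many of them fail."""

    def __init__(self, window=5, min_success_ratio=0.6):
        # Only the most recent `window` results are kept.
        self.results = deque(maxlen=window)
        self.min_success_ratio = min_success_ratio

    def record(self, ok):
        """Store the outcome of one probe (True = success)."""
        self.results.append(bool(ok))

    def success_ratio(self):
        """Fraction of successful probes in the current window."""
        if not self.results:
            return 1.0
        return sum(self.results) / len(self.results)

    def is_alerting(self):
        """Alert only once the window is full and the ratio is too low."""
        window_full = len(self.results) == self.results.maxlen
        return window_full and self.success_ratio() < self.min_success_ratio
```

Waiting for a full window before alerting is a deliberate choice in this sketch: it trades a slightly slower alert for fewer false alarms from a single failed probe.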

Avrohom: Chuck, how could such a widespread outage occur in 2021? We’ve got redundant data centers, high availability servers, and many more layers of redundancy built into our business continuity plans. Shouldn’t businesses, especially large service providers, be better prepared for outages like this?

Chuck: Configuration issues remain a constant problem for most companies as their connectivity grows and as application interfaces and new programs are added to their networks. Misconfigurations can also create vulnerabilities for hackers to exploit with malware and DDoS attacks.

Avrohom: So, it seems like it’s an ongoing challenge. The bigger you grow, the more you need to protect yourself. Scott, what’s your take on this?

Scott: Facebook has over 2.8 billion monthly active users and will reach revenue estimates of $85 billion in 2021. Before this incident, Facebook was deemed by many as “too big to fail” and while a 6-hour downtime might not seem like much, it demonstrates that if simple DNS misconfiguration mistakes can lead to losses of billions of dollars, just imagine the damage that a calculated, state-sponsored hack could inflict on Facebook and everyone relying on their services. This outage has been compared to one of Facebook’s worst setbacks since 2019 when the platform was offline for nearly 24 hours because of a server configuration problem.

Avrohom: This really gives you a lot to think about. Paul, if Facebook, which has an extensive IT infrastructure, can be brought down by a simple misconfiguration, what does that mean for the rest of us who don’t have such a robust infrastructure?

Paul: The good news is that it’s not so much a matter of how robust your infrastructure is as how well it’s managed. For example, I’ve heard countless IT professionals state that they’ve eliminated human error because they’re using automation. Yes, Facebook uses automation, as do all IT shops. However, the vast majority of automated systems in an IT environment are driven by instructions provided by a human being via a user interface, a configuration file, or a script. All of these mechanisms allow for the introduction of human error, which is ultimately what caused this outage in the first place. Essentially, the lesson that we can learn from the Facebook outage, or any outage, is the importance of observability and visibility in any automated system.
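One common way to limit the damage from a human-supplied instruction is to validate the change before the automation applies it. The Python sketch below is purely illustrative and does not describe Facebook's tooling: the function `validate_route_change`, its parameters, and the 20% threshold are all hypothetical. It rejects a proposed set of advertised network prefixes if the change would withdraw too many of the prefixes currently advertised.

```python
def validate_route_change(current, proposed, max_withdrawn_fraction=0.2):
    """Return (ok, reason) for a proposed set of advertised prefixes.

    `current` and `proposed` are sets of prefix strings. The threshold is
    an arbitrary illustrative value, not a recommended setting.
    """
    if not proposed:
        return False, "change would withdraw every advertised prefix"
    withdrawn = current - proposed
    if current and len(withdrawn) / len(current) > max_withdrawn_fraction:
        return False, f"change withdraws {len(withdrawn)} of {len(current)} prefixes"
    return True, "ok"
```

A check like this does not remove human error, but it gives the automated system a chance to stop and ask before an unusually large change takes effect.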

Avrohom: Downtime is extremely expensive for businesses, with costs ranging from $140k to $540k per hour! To put things into perspective, according to The Times, “the outage cost Facebook an estimated $100 million in lost online advertising sales, while its shares fell 5 percent, wiping about $40 billion from its market value.” That is a very expensive proposition. Mirko, how can ordinary businesses protect themselves from this kind of outage, and what should we be thinking about in terms of our business continuity plans?

Mirko: With regard to the specific outage that happened at Facebook, most businesses don’t have much to worry about, because BGP and route servers are used only by large corporations or ISPs. Ordinary businesses usually have no need for this kind of infrastructure. My advice to ordinary businesses would be to build resilient infrastructure. Don’t rely on a single system or network to run critical business. Always have a plan B.
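In software terms, a plan B often comes down to an ordered list of alternatives and a health check. The sketch below shows that idea in Python under stated assumptions: the function `first_healthy` and the channel names in the usage note are hypothetical, and the health check is whatever test makes sense for the business (a DNS lookup, an HTTP request, a payment-gateway ping).

```python
def first_healthy(channels, is_healthy):
    """Return the first channel whose health check passes, or None.

    `channels` is an iterable in priority order; `is_healthy` is a
    caller-supplied function that returns True when a channel is usable.
    """
    for channel in channels:
        try:
            if is_healthy(channel):
                return channel
        except Exception:
            # A health check that itself fails counts as an unhealthy channel.
            continue
    return None
```

For example, `first_healthy(["social-storefront", "own-website", "email-list"], check)` would return the highest-priority sales channel that is currently reachable, so a business can redirect customers instead of waiting out an outage.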

Scott: Facebook’s enormous scale and market share make it an easy choice for billions of users and millions of advertisers and companies, but it’s important not to invest all of your resources in a single service. Its largest competitors for ad dollars are Google and Amazon, so I always recommend platform diversification to customers and small business owners, similar to what Mirko advised. Other services like WordPress and Shopify offer more independent and decentralized platforms that can withstand attacks and accidental downtime without taking down every customer in the process.

Bob: With regard to the concerns of the average business, as Mirko said, the average consumer or small business would not have control over routers and BGP configurations that are on the Internet backbone. The same with DNS providers. If they had a website, they would have to work with their website provider to get the necessary changes to get their website accessible from the Internet.

Chuck: I recommend that companies do regular vulnerability assessments that include penetration testing that can rapidly detect vulnerabilities in code and configurations. Companies, large and small, must take on the responsibility of integrating security throughout the software development lifecycle, running security checks on their applications frequently, and using multiple types of scans, through both static and dynamic analysis, to identify risks.

What should we be thinking about in terms of our business continuity plans? Business continuity is a must for everyone. In today’s cyber environment, it is not a matter of if but when you will experience a breach. The business community needs to be proactive rather than reactive and have both an incident response plan and a plan to continue operating already in place.

Paul: Very much like Chuck said, companies need to do regular vulnerability assessments, and at NETSCOUT we measure observability as part of that assessment.

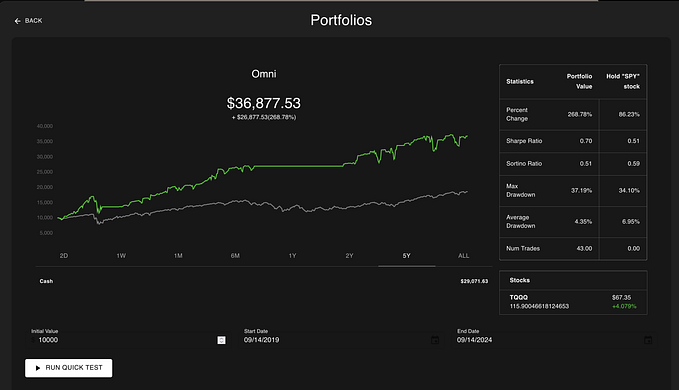

IT needs to consider all the parts in the diagram above when building a solid foundation of visibility coupled with continuous monitoring, both to prevent outages similar to the one at Facebook and to handle any other situation where a business would be impacted by downtime.

At NETSCOUT, we reinforce the need for something called independent visibility, which refers to the physical network. I am not talking about tracing or log information, which is written by people. True independent visibility comes from monitoring the packets on the wire, stored as an immutable record of how the machines talk to one another.

About the Author

Avrohom Gottheil is the founder of #AskTheCEO Media, where he helps global brands get heard over the noise on social media, by presenting their corporate message using language people understand.

Avrohom presents his clients as Thought Leaders, which challenges his audience to reimagine their own mission and vision, delivering actionable insights, and leaving them passionate, motivated, and with the necessary tools to take immediate action.

Avrohom comes from a 20+ year career in IT and Telecom, where he helped businesses around the world install and maintain their communication systems and contact centers. He is a Top-ranked global expert in IoT, AI, Cloud, and Cybersecurity, followed worldwide on Twitter, and a frequent speaker on leveraging technology to accelerate revenue growth.

Listen to him share the latest technology trends, tools, and best practices for IoT, AI, Cloud, Cybersecurity, and emerging technologies on the #AskTheCEO podcast — voted as the #1 Channel Friendly Podcast in 2019 by Forrester, and #2 Podcast from Thinkers360 Thought Leaders in 2020.

Contact Avrohom:

Facebook: AvrohomGottheil

Twitter: @avrohomg

Instagram: @avrohomg